Confidence Interval Biostatistics Application

Table of Contents

1. Understanding the Essence of Confidence Interval Biostatistics in Everyday Research
2. The Role of Confidence Interval Biostatistics in Scientific Validity
3. Demystifying the 95% Confidence Interval in Biostatistical Contexts
4. How Confidence Interval Biostatistics Shapes Public Health Decisions
5. Common Misinterpretations of Confidence Interval Biostatistics Among Early-Career Researchers
6. Calculating Confidence Interval Biostatistics: From Formula to Interpretation
7. The Interplay Between Sample Size and Confidence Interval Biostatistics Width
8. Confidence Interval Biostatistics vs. Credible Intervals: A Bayesian Glance
9. Reporting Standards for Confidence Interval Biostatistics in Academic Journals
10. Practical Tips for Applying Confidence Interval Biostatistics in Your Own Research


Understanding the Essence of Confidence Interval Biostatistics in Everyday Research

Ever tried guessing how many biscuits are left in the tin without actually counting ’em? That’s kinda what we do with confidence intervals in biostatistics, except with way more maths and fewer crumbs. In our world as data storytellers, the confidence interval isn’t just a fancy term tossed around at conferences over lukewarm tea; it’s the backbone of credible scientific inference. When researchers say, “We’re 95% confident the true effect lies between X and Y,” they’re not wingin’ it; they’re leanin’ on the sturdy scaffolding of a method with known long-run behaviour. It gives us a range, not a single point, because let’s be honest: life’s rarely that precise. Whether you’re measuring blood pressure responses to a new drug or tracking pollen counts across Manchester, a confidence interval keeps your conclusions grounded in probability, not prophecy.

The Role of Confidence Interval Biostatistics in Scientific Validity

In the grand theatre of research, the confidence interval plays the understated but vital role of truth-teller. Unlike p-values, which often get all the spotlight despite being rather moody, a confidence interval shows both the direction and the magnitude of an effect. Fancy saying a treatment “works”? Well, does its confidence interval include the null value (zero for a difference, one for a ratio)? If yes, mate, you might just be celebrating noise. This nuance is why journals like *The Lancet* now demand confidence intervals alongside any claim of significance. The confidence interval doesn’t just whisper “maybe”; it shouts, “Here’s the plausible range, take it or leave it.” And in an era drowning in questionable findings, that honesty is worth its weight in gold (or at least in peer-reviewed citations).

Demystifying the 95% Confidence Interval in Biostatistical Contexts

Right, so what’s all this fuss about 95%? When we talk about a confidence interval at the 95% level, we’re not saying there’s a 95% chance the true value is in that particular interval. Nope, that’s a classic mix-up even seasoned blokes stumble on. What it really means is: if we repeated the same study a hundred times and built an interval each time, about 95 of those intervals would capture the actual population parameter. Think of it like casting a net: you don’t know if *this* cast caught the fish, but you trust the method because it lands the fish about 95 casts outta 100. The beauty of the confidence interval lies in its humility: it admits uncertainty while still offering guidance. And honestly? That’s proper science.
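If the "repeat the study loads of times" idea feels abstract, a quick simulation can make it concrete. What follows is only a minimal sketch: the true mean of 5.2, the SD of 0.8, the sample size of 50 and the function name `simulate_coverage` are all made up for illustration, and it uses a known-SD (z) interval to keep the code short.

```python
import math
import random


def simulate_coverage(true_mean=5.2, sd=0.8, n=50, reps=5000, z=1.96, seed=42):
    """Return the fraction of simulated 95% intervals that contain true_mean."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    half_width = z * sd / math.sqrt(n)  # known-SD (z) interval, an assumption
    hits = 0
    for _ in range(reps):
        # One pretend "study": draw n observations and take their mean.
        sample = [rng.gauss(true_mean, sd) for _ in range(n)]
        sample_mean = sum(sample) / n
        # Did this study's interval capture the true mean?
        if sample_mean - half_width <= true_mean <= sample_mean + half_width:
            hits += 1
    return hits / reps


if __name__ == "__main__":
    print(f"Share of intervals capturing the truth: {simulate_coverage():.3f}")
```

The share should land close to 0.95. Any single interval either caught the true mean or missed it; the 95% describes the whole pile of intervals.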

How Confidence Interval Biostatistics Shapes Public Health Decisions

When NHS policymakers decide whether to roll out a new vaccine or tweak screening guidelines, they don’t just glance at averages; they pore over the confidence intervals. Why? Because lives hang in the balance, and narrow margins matter. Suppose a study finds a 12% reduction in heart attacks with a new statin, but the 95% confidence interval runs from a 3% increase to a 27% reduction (that is, -3% to +27%). That negative lower bound? Yeah, that means the data are also compatible with the drug slightly *increasing* risk. Without examining the full interval, you’d miss that crucial ambiguity. Public health isn’t about bold headlines; it’s about cautious, evidence-based steps, and confidence intervals provide the guardrails.

Common Misinterpretations of Confidence Interval Biostatistics Among Early-Career Researchers

Ach, bless ’em: the fresh-faced PhD students who think a 95% confidence interval means “95% probability the truth is inside.” We’ve all been there, haven’t we? But here’s the rub: in frequentist stats (which underpin most confidence intervals in biostatistics), the population parameter is fixed, so it’s either in the interval or it ain’t. The 95% refers to the long-run performance of the method, not the probability of this specific interval. Another blunder? Assuming that overlapping confidence intervals for two groups mean “no difference.” Not necessarily! Two intervals can overlap while the interval for the *difference* between the groups still excludes zero, so test or estimate the difference directly. These misunderstandings aren’t just academic; they can skew conclusions, waste funding, and muddy the scientific record. So yeah, getting confidence intervals right matters more than your morning cuppa.
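The overlap trap is easier to believe once you see numbers. Here is a rough sketch with invented figures: two independent groups with estimates of 0.0 and 3.0, each with a standard error of 1.0. The helper names are hypothetical, and a normal (z) approximation is assumed throughout.

```python
import math
from statistics import NormalDist

Z95 = NormalDist().inv_cdf(0.975)  # two-sided 95% critical value, about 1.96


def ci(estimate, se, z=Z95):
    """Normal-approximation interval: estimate plus or minus z * se."""
    return (estimate - z * se, estimate + z * se)


def intervals_overlap(a, b):
    """True when two (lower, upper) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


def difference_ci(est_a, se_a, est_b, se_b, z=Z95):
    """Interval for (b - a), assuming the two groups are independent."""
    se_diff = math.sqrt(se_a**2 + se_b**2)
    return ci(est_b - est_a, se_diff, z)


group_a = ci(0.0, 1.0)                    # roughly (-1.96, 1.96)
group_b = ci(3.0, 1.0)                    # roughly (1.04, 4.96)
diff = difference_ci(0.0, 1.0, 3.0, 1.0)  # roughly (0.23, 5.77)

print("Group intervals overlap:", intervals_overlap(group_a, group_b))
print("Difference interval excludes zero:", diff[0] > 0 or diff[1] < 0)
```

The two group intervals overlap, yet the interval for the difference sits entirely above zero. Eyeballing overlap is a more conservative check than looking at the difference itself, which is why it can mislead.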

Calculating Confidence Interval Biostatistics: From Formula to Interpretation

Let’s crack open the maths tin, shall we? For a mean, the standard confidence interval formula is: sample mean ± (critical value × standard error), where the standard error is SD/√n. The critical value? That’s your z- or t-score: use t when you’re estimating the SD from the sample (the usual case), and z when the population SD is known or the sample is large enough that the two barely differ. Say you’ve got a sample of 50 patients with average cholesterol at 5.2 mmol/L (SD = 0.8). Your standard error is 0.8/√50 ≈ 0.113. For a 95% CI with 49 degrees of freedom, t* ≈ 2.01 (from a t-table), so the margin of error = 2.01 × 0.113 ≈ 0.227. Thus, the confidence interval = 5.2 ± 0.227 → (4.97, 5.43) mmol/L. Now, interpreting that: we’re 95% confident the true population mean falls within this band. Note the wording: “confident,” not “probable.” Precision in language mirrors precision in the interval.
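For anyone who’d rather let the computer do the sums, the cholesterol example can be sketched in a few lines of Python. The function name `mean_ci` is just an illustrative choice, and the critical value is passed in by hand (2.01, read off a t-table as above) rather than looked up by the code.

```python
import math


def mean_ci(sample_mean, sd, n, critical_value):
    """Return (lower, upper) for: sample mean +/- critical value * SE."""
    standard_error = sd / math.sqrt(n)
    margin = critical_value * standard_error
    return sample_mean - margin, sample_mean + margin


# Worked example from the text: n = 50, mean 5.2 mmol/L, SD 0.8, t* of 2.01.
lower, upper = mean_ci(5.2, 0.8, 50, 2.01)
print(f"95% CI: ({lower:.2f}, {upper:.2f}) mmol/L")  # 95% CI: (4.97, 5.43) mmol/L
```

Keeping the critical value as an argument makes it obvious which table (z or t) the interval is leaning on, which is half the battle when checking someone else’s numbers.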

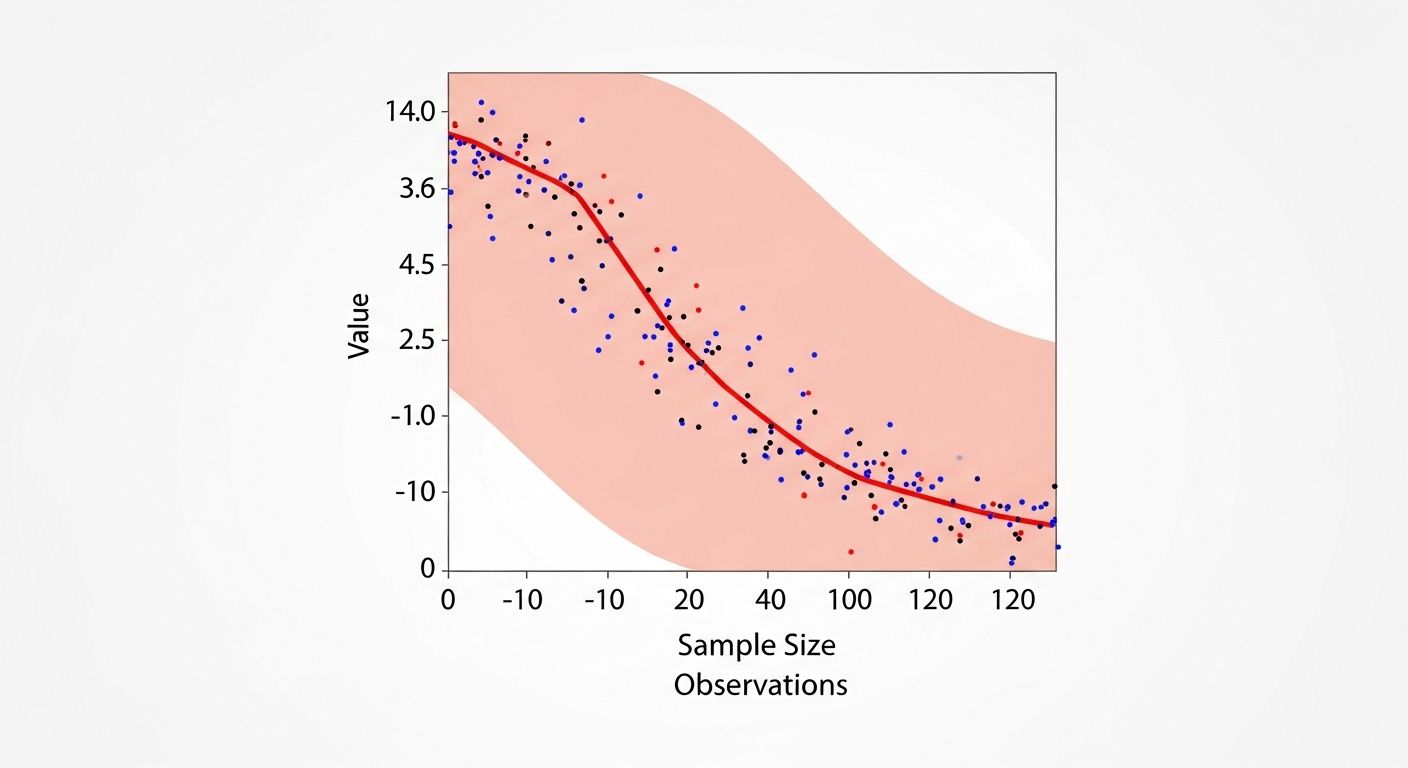

The Interplay Between Sample Size and Confidence Interval Biostatistics Width

Ever noticed how bigger samples give tighter confidence intervals? That’s no coincidence; it’s maths doing its quiet magic. The standard error shrinks in proportion to 1/√n, so doubling your sample doesn’t halve the interval width; it reduces it by about 30%. To halve the width you need four times the sample. Here’s a quick table to illustrate (the figures correspond to SD = 1 and a z critical value of 1.96):

| Sample Size (n) | Standard Error | 95% CI Width (approx.) |
|---|---|---|
| 25 | 0.20 | 0.78 |
| 100 | 0.10 | 0.39 |
| 400 | 0.05 | 0.20 |

As you can see, the confidence interval narrows dramatically with larger n. But beware: bigger isn’t always better if your sampling’s biased. A massive but skewed sample gives a precise yet wrong confidence interval. Garbage in, gospel out? Nah, mate. Quality trumps quantity every time.
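The table’s numbers can be reproduced with a short sketch. It assumes SD = 1 and a z critical value of 1.96, which is what the standard errors in the table imply; `ci_width` is a hypothetical helper name.

```python
import math


def ci_width(sd, n, z=1.96):
    """Full width of a z-based interval: 2 * z * SD / sqrt(n)."""
    return 2 * z * sd / math.sqrt(n)


for n in (25, 100, 400):
    se = 1.0 / math.sqrt(n)
    print(f"n={n:>3}  SE={se:.2f}  width={ci_width(1.0, n):.2f}")

# Doubling n multiplies the width by 1/sqrt(2), a cut of roughly 29%.
shrink = 1 - ci_width(1.0, 200) / ci_width(1.0, 100)
print(f"Width reduction from doubling n: {shrink:.1%}")
```

Notice that each row of the table quadruples n to halve the width. Precision gets expensive quickly, which is worth remembering when budgeting a study.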

Confidence Interval Biostatistics vs. Credible Intervals: A Bayesian Glance

Now, don’t get us started on Bayesian stats, but since you asked… In frequentist land, a confidence interval is about procedure reliability. In Bayesian territory, you get *credible intervals*, which *do* allow statements like “95% probability the parameter is in this range.” The difference? Priors. Bayesians bake in prior knowledge and treat the parameter as uncertain; frequentists treat it as fixed and lean on the data alone. Both have merits, but in most medical journals, the confidence interval remains king. Still, the rise of Bayesian methods in adaptive trials shows the field’s evolving. One day, maybe they’ll hold hands. Until then, know your framework: mixing them up in a paper’s like wearin’ socks with sandals, technically possible but frowned upon.
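To see how a prior changes things, here is a minimal sketch of one textbook case: a normal prior on the mean combined with normally distributed data whose SD is treated as known. The prior (mean 5.0, SD 1.0) is invented purely for illustration, the data figures reuse the cholesterol example, and `normal_credible_interval` is a hypothetical name. Real Bayesian analyses are rarely this tidy.

```python
import math
from statistics import NormalDist


def normal_credible_interval(prior_mean, prior_sd, sample_mean, sd, n, level=0.95):
    """Posterior mean and equal-tailed credible interval (normal prior, known SD)."""
    prior_precision = 1 / prior_sd**2   # how much the prior "knows"
    data_precision = n / sd**2          # how much the data "know"
    post_precision = prior_precision + data_precision
    # The posterior mean is a precision-weighted average of prior and data.
    post_mean = (prior_precision * prior_mean
                 + data_precision * sample_mean) / post_precision
    post_sd = math.sqrt(1 / post_precision)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return post_mean, (post_mean - z * post_sd, post_mean + z * post_sd)


post_mean, (low, high) = normal_credible_interval(5.0, 1.0, 5.2, 0.8, 50)
print(f"Posterior mean {post_mean:.3f}, 95% credible interval ({low:.2f}, {high:.2f})")
```

With 50 observations the data swamp this fairly vague prior, so the credible interval ends up close to the frequentist one. The numbers look alike, but only the Bayesian interval licenses a "95% probability" statement, and only given that prior.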

Reporting Standards for Confidence Interval Biostatistics in Academic Journals

If you’re submitting to *BMJ* or *Nature*, skip the p-value-only nonsense. CONSORT and STROBE guidelines call for a measure of precision, such as a 95% confidence interval, alongside key estimates. Editors want to see: point estimate, confidence interval, and confidence level (usually 95%). Bonus points if you plot them in forest plots or caterpillar graphs. Why? Because a hazard ratio of 0.85 looks impressive until you spot that its confidence interval stretches from 0.60 to 1.20, straddling the null value of 1. Suddenly, it’s less “breakthrough” and more “meh.” Transparent reporting of confidence intervals builds trust, reduces hype, and (dare we say) makes science *better*.
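A tiny helper can keep the reporting consistent across a manuscript. This is only a sketch: `report_estimate` and its output format are our own invention, not a format mandated by any journal or guideline, so check the target journal’s style before borrowing it.

```python
def report_estimate(label, estimate, lower, upper, null_value, level=95):
    """Format an estimate with its interval and flag whether it spans the null."""
    text = f"{label} {estimate:.2f} ({level}% CI {lower:.2f} to {upper:.2f})"
    includes_null = lower <= null_value <= upper
    return text, includes_null


# Hazard ratio example from the text; the null value for a ratio is 1.
text, includes_null = report_estimate("HR", 0.85, 0.60, 1.20, null_value=1.0)
print(text)  # HR 0.85 (95% CI 0.60 to 1.20)
print("Interval includes the null value:", includes_null)  # True
```

For a difference (say, a mean difference in blood pressure), pass `null_value=0.0` instead.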

Practical Tips for Applying Confidence Interval Biostatistics in Your Own Research

So you’re knee-deep in data and wondering how to wield confidence intervals like a pro? First, always report them, not just p-values. Second, interpret them contextually: a narrow confidence interval around a trivial effect isn’t useful, and a wide one around a large effect might still be meaningful. Third, use software wisely: R, Python, or even Excel can compute them, but understand what’s under the bonnet. And finally, when presenting, visualise! A bar chart with error bars showing confidence intervals tells a richer story than a table of numbers. For more on foundational concepts, check out Jennifer M Jones, explore our Fields section, or dive into our detailed piece on confidence-level-in-statistics-meaning. Remember: good science isn’t about certainty; it’s about honest uncertainty, and the confidence interval is your best mate for that.

Frequently Asked Questions

What is a confidence interval in biostatistics?

In biostatistics, a confidence interval is a range of values, derived from sample data, that is likely to contain the true population parameter with a specified level of confidence—commonly 95%. It reflects the precision and uncertainty of an estimate, such as a mean difference or odds ratio, and is fundamental to inferential statistics in health and life sciences.

How is CI used in research?

Researchers use confidence intervals to assess the precision and clinical relevance of their findings. Rather than relying solely on p-values, scientists examine whether the interval includes null values (e.g., zero for differences, one for ratios) and consider the magnitude of the effect. This approach supports more nuanced interpretation and better informs decision-making in fields like epidemiology, pharmacology, and public health.

How to explain a 95% confidence interval?

A 95% confidence interval means that if the same study were repeated many times under identical conditions, approximately 95% of the calculated intervals would contain the true population parameter. It does *not* mean there’s a 95% probability that the specific interval from your study contains the truth, since in frequentist statistics the parameter is fixed, not random.

What does a 95% confidence level mean in scientific reporting?

In scientific reporting, a 95% confidence level describes the long-run reliability of the estimation method. It tells readers that the procedure used to generate the confidence interval captures the true parameter in 95% of repeated samples. This standard balances rigor and practicality, allowing researchers to communicate uncertainty transparently while maintaining statistical credibility.
